LongTextBench and GenEval: Reading ERNIE-Image's Scores

ERNIE-Image posts 0.9733 on LongTextBench and 0.8728 on GenEval with the enhancer on. Here is what both numbers measure and what they predict for your real generations.

You saw ERNIE-Image's benchmark card and two numbers stood out: 0.9733 LongTextBench and 0.8728 GenEval. Those are the two scores that separate ERNIE-Image from Flux, SDXL, and Ideogram on the jobs where it wins. This post walks through what each benchmark actually tests, why the numbers are high, and what they predict for the generations you ship.

What LongTextBench measures

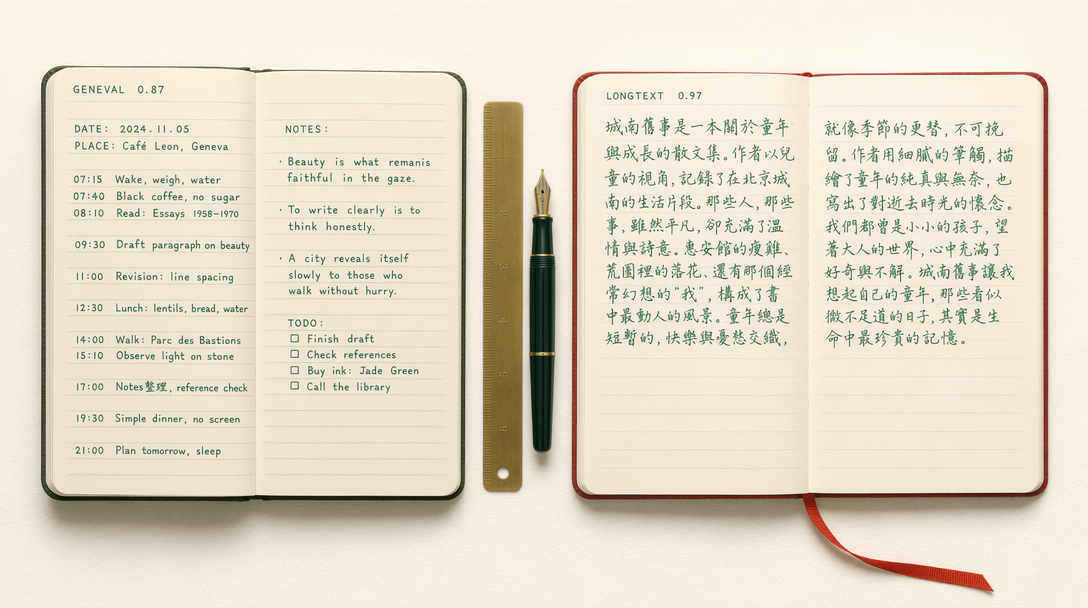

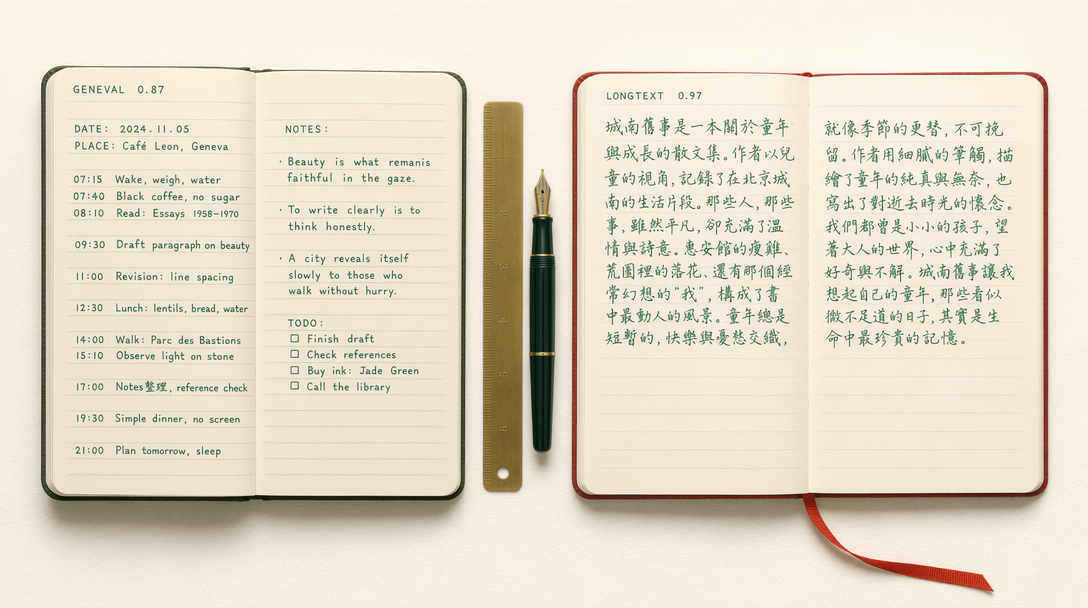

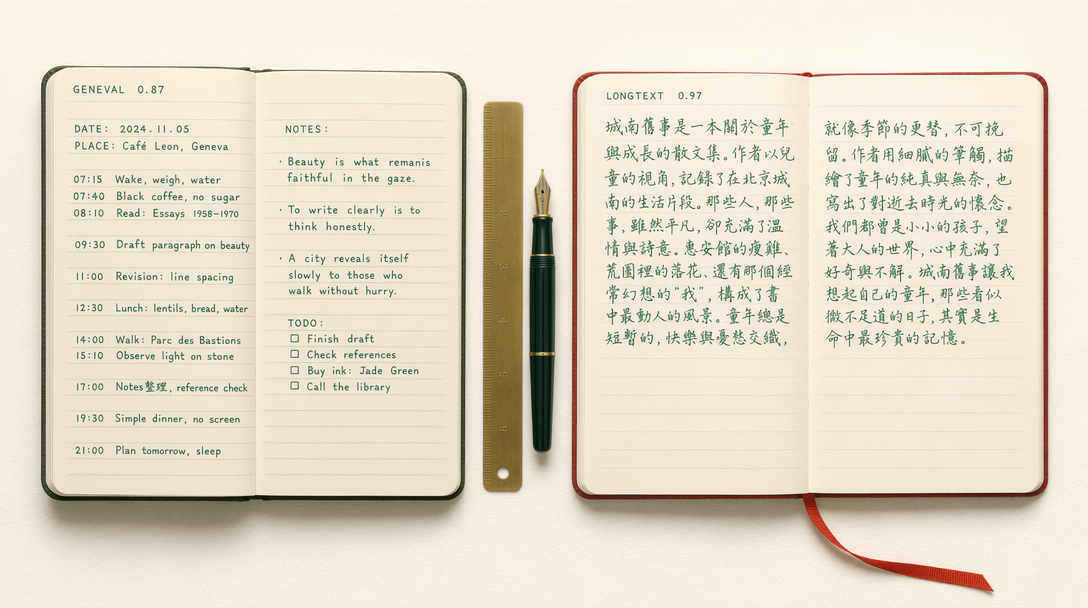

LongTextBench is a text rendering benchmark. Each prompt asks the model to produce an image containing a specific passage of text, often multi-line, often in a realistic context like a sign, a poster, a product label, or a document. The scorer then reads the rendered image with OCR and compares the recovered string against the target.

The score is a similarity metric with a ceiling of 1.0. A score of 0.9733 suggests the OCR recovered the target text exactly in the vast majority of generations, with minor character-level drops on the rest. For context, earlier diffusion models would sit between 0.20 and 0.55 on this kind of test because they learned to paint shapes that looked like text without encoding the actual letters.
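To make the idea of an OCR similarity score concrete, here is a minimal sketch that compares a target string with a recovered string. It assumes a normalized Levenshtein similarity, which is a common choice for this kind of comparison and not necessarily the formula LongTextBench uses. The function names `editDistance` and `textSimilarity` are illustrative.

```javascript
// Levenshtein edit distance: insertions, deletions, and substitutions each cost 1.
function editDistance(a, b) {
  // Before row i is processed, prev[j] is the distance between
  // a.slice(0, i - 1) and b.slice(0, j).
  let prev = Array.from({ length: b.length + 1 }, (_, j) => j);
  for (let i = 1; i <= a.length; i++) {
    const curr = [i];
    for (let j = 1; j <= b.length; j++) {
      const substitution = prev[j - 1] + (a[i - 1] === b[j - 1] ? 0 : 1);
      curr[j] = Math.min(prev[j] + 1, curr[j - 1] + 1, substitution);
    }
    prev = curr;
  }
  return prev[b.length];
}

// Similarity in [0, 1]: 1.0 for identical strings, lower as more edits are needed.
// Assumption: normalizing by the longer string; the real scorer may differ.
function textSimilarity(target, recovered) {
  const longest = Math.max(target.length, recovered.length);
  if (longest === 0) return 1;
  return 1 - editDistance(target, recovered) / longest;
}

const target = "Sale 25% Off";
console.log(textSimilarity(target, "Sale 25% Off")); // identical strings
console.log(textSimilarity(target, "Sale 25% Of"));  // one dropped character
```

Under this assumed metric, a single dropped character in a 12-character string costs 1/12 of the score, so an average near 0.97 leaves very little room for misspellings.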

What pushed ERNIE-Image here is a dedicated typography head fed by a character-aware tokenizer. The model does not just pattern-match on letter shapes; it places glyphs. That is the difference between "generate something that looks like it says sale" and "generate an image that contains the string Sale 25% Off on row 2 of the poster."

What GenEval measures

GenEval is a compositional benchmark. It scores six axes: single object, two objects, counting, colors, position, and color attribution. The grader is a trained object detector. Each prompt has a deterministic correctness check. For example, "a red cube and two blue spheres" requires the detector to find exactly one red cube and exactly two blue spheres in the image.
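The red cube example can be sketched as a small pass/fail check. This is an illustration of the idea only: the `detections` shape, the `requirements` shape, and the `passesCheck` function are hypothetical and do not reproduce GenEval's actual detector output or grading code.

```javascript
// Hypothetical detector output: one entry per detected object.
const detections = [
  { label: "cube", color: "red" },
  { label: "sphere", color: "blue" },
  { label: "sphere", color: "blue" },
];

// Requirements derived by hand from "a red cube and two blue spheres".
const requirements = [
  { label: "cube", color: "red", count: 1 },
  { label: "sphere", color: "blue", count: 2 },
];

// Pass only if every requirement is matched by exactly `count` detections.
function passesCheck(found, required) {
  return required.every((req) => {
    const matches = found.filter(
      (d) => d.label === req.label && d.color === req.color
    );
    return matches.length === req.count;
  });
}

console.log(passesCheck(detections, requirements));
```

Because the match is exact, a third blue sphere or a green cube fails the prompt outright, which is why the counting and color attribution axes are hard to score well on.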

A score of 0.8728 with the enhancer on is strong. Flux.1 sits around 0.67 on the same protocol and SDXL around 0.55. The counting and color attribution axes, where most models collapse, are what drive the ERNIE-Image score up. ERNIE-Image handles "exactly three" and "a red chair to the left of a green table" more reliably than the open models.

The enhancer is worth a note. ERNIE-Image ships with a prompt enhancer that rewrites your short prompt into a richer description before generation. Benchmark scores are reported with and without it. The 0.8728 is the enhancer-on number, which is the mode you would use in production. Without the enhancer the GenEval score drops closer to 0.80, which is still above Flux.

What these numbers predict in practice

For typography-heavy work, LongTextBench 0.97 means you can write a prompt like "a storefront poster reading Weekend Market April 19 to April 26, with subtext saying Free Samples Inside" and get back a poster where that exact text appears, correctly spelled, on the correct lines. You do not need a separate text overlay step. For e-commerce creative, event posters, and social cards this removes an entire production lane.

For compositional work, GenEval 0.87 means your counting and positioning prompts land more often. If you have a product catalog that specifies "two bottles on a wooden tray with a folded towel to the right," ERNIE-Image will resolve the spatial constraints more reliably than the open alternatives. You will still need to regenerate a fraction of the time, but the hit rate is high enough to feed automated pipelines without a manual review gate on every frame.
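For pipeline planning, the regeneration rate can be turned into rough arithmetic. The sketch below assumes each generation passes independently with a fixed probability, and it borrows 0.87 from the GenEval score as a stand-in. Your own prompts will have their own pass rate, so treat the input as something to measure rather than a given.

```javascript
// Probability that at least one of `attempts` independent generations passes.
function passWithinAttempts(passRate, attempts) {
  return 1 - Math.pow(1 - passRate, attempts);
}

// Expected number of generations until the first pass (geometric distribution).
function expectedAttempts(passRate) {
  return 1 / passRate;
}

// Assumption: borrowed from the GenEval score, not measured on your prompts.
const assumedPassRate = 0.87;

for (const attempts of [1, 2, 3]) {
  console.log(attempts, passWithinAttempts(assumedPassRate, attempts).toFixed(4));
}
console.log("expected attempts:", expectedAttempts(assumedPassRate).toFixed(2));
```

If the independence assumption roughly holds, a retry budget of two or three attempts covers most failures, which is what makes an automated acceptance check more practical than reviewing every frame by hand.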

The benchmark card also lists two OneIG numbers, 0.5750 OneIG-EN and 0.5543 OneIG-ZH, which give you the coarser quality read. OneIG is a holistic image generation benchmark covering realism, prompt adherence, and aesthetic quality. 0.57 on the English side is competitive with frontier open models. The Chinese score at 0.5543 is the key distinguishing number, since most other image models have little Chinese benchmark coverage.

Where ERNIE-Image still trails

Flux.1 and SDXL still hold edges on pure aesthetic quality when text is not part of the job. Midjourney-style hero shots, highly stylized illustration, and long-tail artistic prompts often still look better on Flux. Ideogram is the closest direct competitor on typography and, in some typeface categories, edges ahead on short text.

GenEval does not capture fine texture, hand anatomy, or motion cues. The 0.87 tells you composition is solid. It does not tell you the hands are right.

Running a benchmark-style call

Here is a call against the fal endpoint that mirrors a LongTextBench prompt. You can swap the prompt and run it repeatedly against your own acceptance check.

    import { fal } from "@fal-ai/client";

    fal.config({ credentials: process.env.FAL_KEY });

    const result = await fal.subscribe("fal-ai/ernie-image", {
      input: {
        prompt: "A vintage bookshop window display with a hand lettered sign reading Rare Editions Since 1952, warm tungsten light, shallow depth of field",
        image_size: "landscape_16_9",
        num_inference_steps: 30,
        enable_enhancer: true,
        seed: 42
      },
      logs: true
    });

    console.log(result.data.images[0].url);
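Running the call repeatedly against an acceptance check could look something like the loop below. It is a sketch under stated assumptions: `generateUntilAccepted`, `generate`, and `accept` are hypothetical names, and both callbacks are supplied by you. The demo uses stubs instead of the real endpoint so the control flow is visible without a network call.

```javascript
// Try successive seeds until `accept` approves an image or attempts run out.
// `generate(seed)` and `accept(image)` are hypothetical caller-supplied callbacks.
async function generateUntilAccepted(
  generate,
  accept,
  { maxAttempts = 3, startSeed = 42 } = {}
) {
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    const seed = startSeed + attempt;
    const image = await generate(seed);
    if (await accept(image)) {
      return { accepted: true, image, seed, attempts: attempt + 1 };
    }
  }
  return { accepted: false, image: null, seed: null, attempts: maxAttempts };
}

// Stub demo: a fake generator, and a fake check that only approves seed 44.
const fakeGenerate = async (seed) => ({ url: `https://example.invalid/${seed}.png`, seed });
const fakeAccept = async (image) => image.seed === 44;

generateUntilAccepted(fakeGenerate, fakeAccept).then((outcome) => {
  console.log(outcome.accepted, outcome.attempts, outcome.seed);
});
```

In a real pipeline, `generate` would wrap the fal call with the seed passed through, and `accept` would be your own check, for example an OCR pass over the returned image compared against the target text.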

For the turbo tier, swap fal-ai/ernie-image for fal-ai/ernie-image/turbo, which costs $0.01 per megapixel versus $0.03 for the standard endpoint. The turbo head trades a small amount of quality and typography fidelity for a much faster and cheaper render, which is the right tradeoff for draft mode or bulk throughput.
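The per-megapixel prices translate into per-image costs with simple arithmetic. This sketch assumes a megapixel is 1,000,000 pixels and that billing is proportional with no rounding, neither of which is confirmed here. The 1024 by 576 output size is a hypothetical stand-in, since the actual pixel dimensions behind `landscape_16_9` are not stated above.

```javascript
// Rates in USD per megapixel, as quoted for the two tiers.
const RATE_STANDARD = 0.03;
const RATE_TURBO = 0.01;

// Assumption: 1 megapixel = 1,000,000 pixels, billed proportionally with no rounding.
function costPerImage(widthPx, heightPx, ratePerMegapixel) {
  const megapixels = (widthPx * heightPx) / 1e6;
  return megapixels * ratePerMegapixel;
}

// Hypothetical output size; check the real dimensions of your chosen image_size.
const width = 1024;
const height = 576;
const batchSize = 1000;

const standardBatch = costPerImage(width, height, RATE_STANDARD) * batchSize;
const turboBatch = costPerImage(width, height, RATE_TURBO) * batchSize;

console.log("standard, 1000 images: $" + standardBatch.toFixed(2));
console.log("turbo, 1000 images: $" + turboBatch.toFixed(2));
```

Whatever the real dimensions turn out to be, the ratio holds: at these rates the turbo tier costs one third as much per image as the standard tier.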

The short read

LongTextBench 0.9733 means typography works. GenEval 0.8728 means composition works. Neither number tells you whether the image will feel right for your brand, which is still a taste call. Use the scores to decide when to reach for ERNIE-Image versus a Flux-class alternative, and reserve the subjective tier for the work where aesthetics dominate.