Baidu Qianfan vs fal Endpoints: When to Use Each

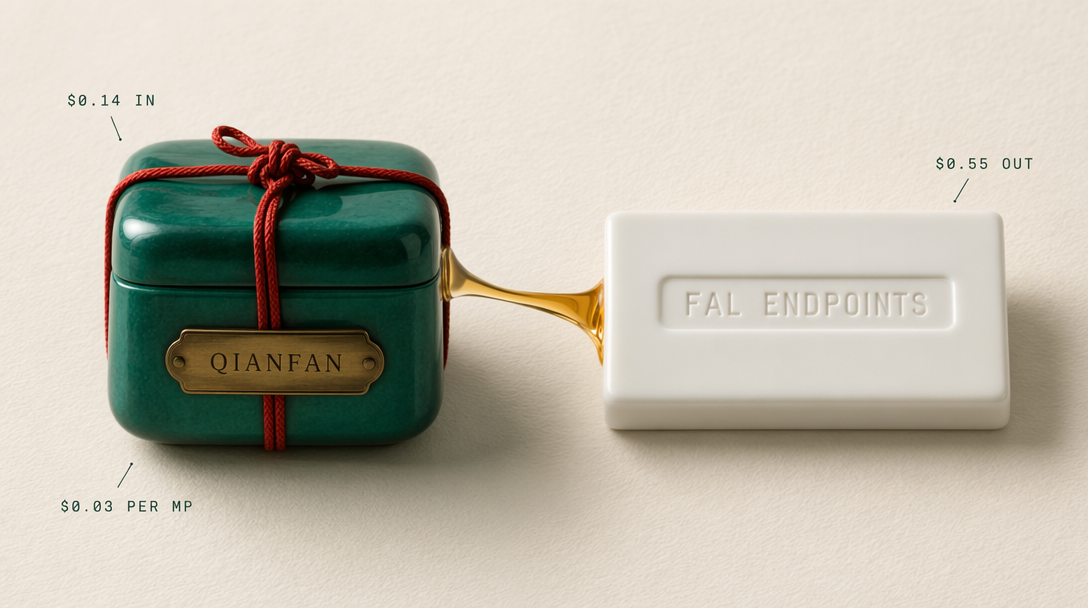

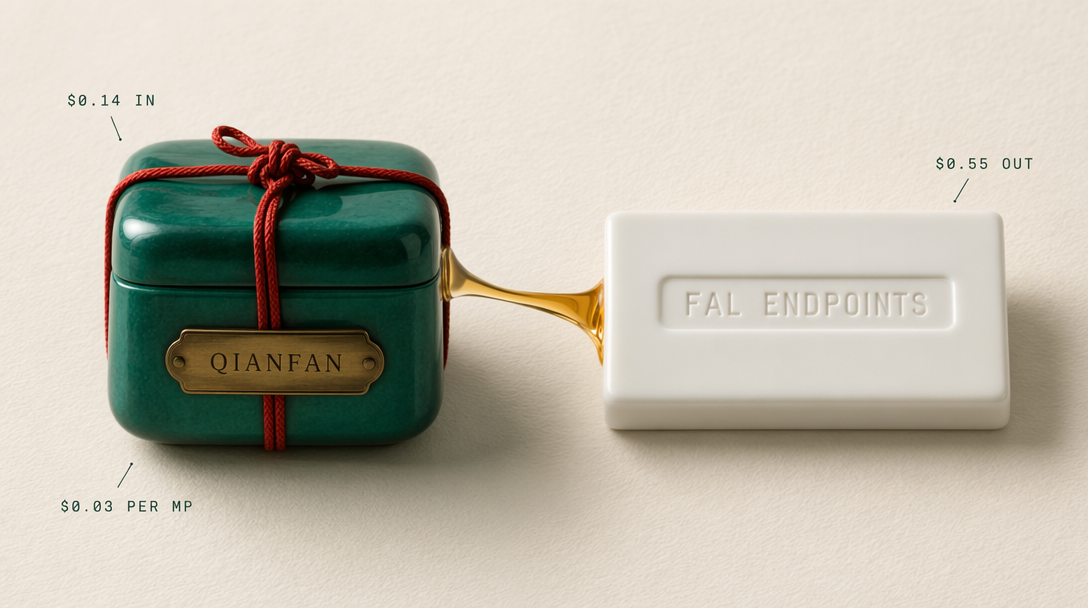

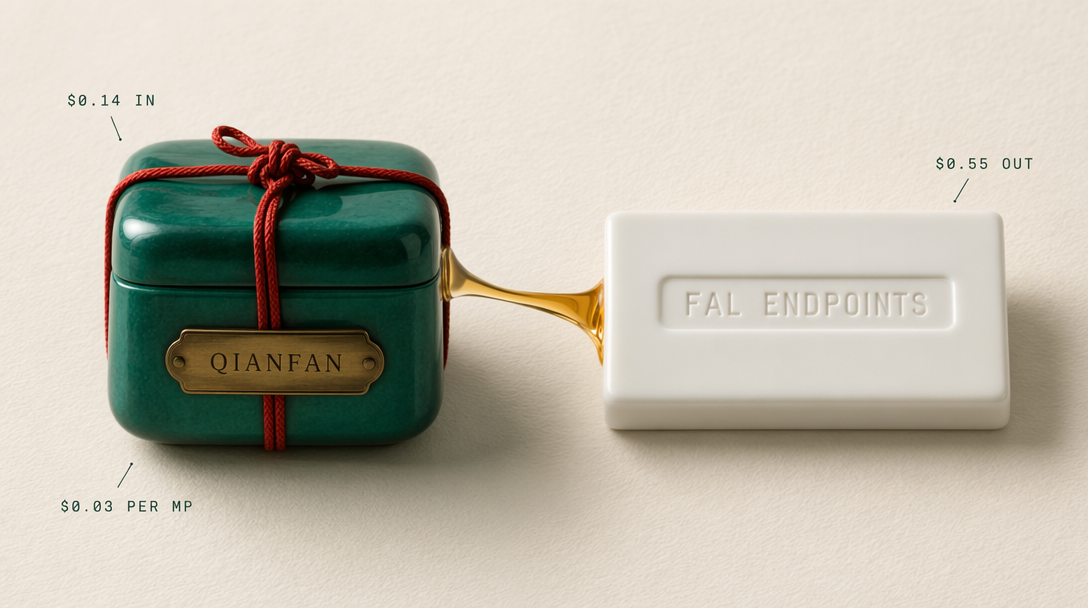

The ERNIE family splits across two platforms. Qianfan serves ERNIE 5.0 text and multimodal from China. fal serves ERNIE-Image globally. Here is the exact routing call.

If you want to ship with the ERNIE family, you will be integrating two platforms, not one. Baidu Qianfan owns the ERNIE 5.0 text and multimodal chat surface. fal owns the ERNIE-Image generation endpoint and its turbo variant. This post walks you through which one to reach for per workload, what the auth model looks like, where region latency kicks in, and how to keep the integration clean.

The split, in one line

Qianfan handles language and multimodal reasoning with ERNIE 5.0. fal handles image generation with ERNIE-Image. There is overlap at the edges, but for a production product you keep the two lanes separate and route per job.

Qianfan pricing for ERNIE 5.0 sits around $0.60 per 1M input tokens and $2.10 per 1M output tokens. That puts ERNIE 5.0 meaningfully under GPT-5 and Claude Opus 4.7 list pricing, so if your workload has heavy Chinese NLP traffic the unit economics are attractive.

fal pricing for ERNIE-Image is per megapixel. Standard runs $0.03 per MP, turbo runs $0.01 per MP. A 1600x900 render at standard is 1.44 MP, which works out to roughly $0.043 per cover image. For draft or thumbnail work the turbo tier at $0.014 per 1600x900 frame is the right default.
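If you want to sanity-check that arithmetic for other sizes, here is a minimal sketch. It assumes billing is linear in megapixels with no rounding, which this post does not confirm, and `estimateImageCost` is a hypothetical helper, not part of any SDK.

```javascript
// Per-megapixel rates quoted in this post (USD). Verify against fal's
// pricing page before budgeting on them.
const RATE_PER_MP = { standard: 0.03, turbo: 0.01 };

// Hypothetical helper: assumes cost = (width * height / 1e6) * rate, unrounded.
function estimateImageCost(width, height, tier = "standard") {
  const rate = RATE_PER_MP[tier];
  if (rate === undefined) throw new Error(`unknown tier: ${tier}`);
  const megapixels = (width * height) / 1_000_000;
  return { megapixels, usd: megapixels * rate };
}

const cover = estimateImageCost(1600, 900, "standard");
const draft = estimateImageCost(1600, 900, "turbo");
console.log(cover.megapixels.toFixed(2), cover.usd.toFixed(4)); // 1.44 0.0432
console.log(draft.usd.toFixed(4)); // 0.0144
```

Multiply the per-image figure by your expected daily volume to see where the turbo tier starts to matter.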

Auth model on each side

Qianfan uses OAuth-style API keys tied to your Baidu Cloud account. You generate an API key in the Qianfan console, store it as an environment variable, and send it as a Bearer token on each request. The OpenAI-compatible wrapper accepts the same header format, which means you can point your existing OpenAI SDK at the Qianfan base URL and swap model names.

fal uses a single FAL_KEY issued from your fal dashboard. The @fal-ai/client SDK reads it from the environment or accepts a credentials field in fal.config. Queue polling, webhooks, and streaming all flow through the same key.

Treat both keys as server-side secrets. Never ship either key to a browser. If a client needs to kick off generation, proxy the request through your backend, or use fal's server-side proxy helper on the fal side.
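On the Qianfan side, keeping the key on the server can be as simple as building the request in one place. The sketch below is illustrative: `buildQianfanRequest` is a hypothetical helper, and the URL, header format, and model name are the ones used in this post's curl example rather than values checked against Baidu's current documentation.

```javascript
// Hypothetical helper: builds a Chat Completions request for Qianfan's
// OpenAI-compatible endpoint without sending it, so the key never leaves
// server-side code.
function buildQianfanRequest(apiKey, messages, { stream = false } = {}) {
  if (!apiKey) throw new Error("QIANFAN_API_KEY is not set");
  return {
    url: "https://qianfan.baidubce.com/v2/chat/completions",
    init: {
      method: "POST",
      headers: {
        Authorization: `Bearer ${apiKey}`, // Bearer token, as described above
        "Content-Type": "application/json",
      },
      body: JSON.stringify({ model: "ernie-5.0", messages, stream }),
    },
  };
}

// Usage on your backend (Node 18+ ships a global fetch):
// const { url, init } = buildQianfanRequest(process.env.QIANFAN_API_KEY, messages);
// const response = await fetch(url, init);
```

Failing fast on a missing key is a design choice here. It surfaces a misconfigured environment at the call site instead of as a 401 from a remote region.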

Region latency is the quiet gotcha

Qianfan is hosted in mainland China. From North American or European backends you will see 200 to 400 ms of round-trip latency on top of generation time. That is fine for background jobs, transcription pipelines, or non-interactive summarization. It is rough for a chat UI where first-token latency matters.

If your users are in China, Qianfan is the fastest ERNIE 5.0 you can get and the latency picture flips in your favor. If your users are elsewhere, budget the extra round trip or pair Qianfan with streaming so the perceived latency lines up with the first token rather than the full response.

fal routes globally with edge-aware scheduling. You will usually see sub-200 ms request overhead from any region, with generation time dominating. For the ERNIE-Image endpoint, a standard 1024x1024 render lands in roughly 6 to 12 seconds and a turbo render in 2 to 4 seconds.

Calling Qianfan from the OpenAI-compatible wrapper

This is the shape you want. The endpoint follows the OpenAI Chat Completions schema, so your client code stays portable between frontier providers and ERNIE 5.0.

    curl https://qianfan.baidubce.com/v2/chat/completions \
      -H "Authorization: Bearer $QIANFAN_API_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "ernie-5.0",
        "messages": [
          {"role": "system", "content": "You summarize Chinese legal filings in English."},
          {"role": "user", "content": "Summarize the key liabilities in the attached document in five bullets."}
        ],
        "temperature": 0.3,
        "max_tokens": 800,
        "stream": false
      }'

Set "stream": true and switch to a streaming reader on your side for chat UIs. The event shape matches OpenAI's SSE format, so any library that parses OpenAI streams will parse this one.
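If you would rather not pull in a library, the parsing itself is small. Here is a minimal sketch, assuming OpenAI-style chunks: `data: {json}` lines, text under `choices[0].delta.content`, and a final `data: [DONE]` sentinel. `createSseTextExtractor` is a hypothetical name, and the payload shape has not been verified against Qianfan's actual responses.

```javascript
// Hypothetical helper: incrementally extracts text deltas from an
// OpenAI-style SSE stream. Network chunks can split a line in half,
// so the unfinished tail is buffered until the next chunk arrives.
function createSseTextExtractor() {
  let buffer = "";
  let done = false;
  return {
    // Feed one decoded chunk; returns the text deltas it completed.
    push(chunk) {
      buffer += chunk;
      const lines = buffer.split("\n");
      buffer = lines.pop(); // keep the trailing partial line
      const deltas = [];
      for (const raw of lines) {
        const line = raw.trim();
        if (done || !line.startsWith("data:")) continue;
        const payload = line.slice(5).trim();
        if (payload === "[DONE]") {
          done = true;
          continue;
        }
        const delta = JSON.parse(payload).choices?.[0]?.delta?.content;
        if (delta) deltas.push(delta);
      }
      return deltas;
    },
    get done() {
      return done;
    },
  };
}

// Two chunks, with the second event split across the chunk boundary.
const extractor = createSseTextExtractor();
console.log(extractor.push('data: {"choices":[{"delta":{"content":"Hel"}}]}\n\ndata: {"choi'));
console.log(extractor.push('ces":[{"delta":{"content":"lo"}}]}\n\ndata: [DONE]\n\n'));
```

In a real handler you would feed `push` from a `TextDecoder` over the response body and forward each delta to the UI as it arrives.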

Calling fal for ERNIE-Image

The fal side uses the typed client. The queue model is the default, which means you submit, poll, and retrieve. The SDK hides that with subscribe, which streams progress and returns the final payload.

    import { fal } from "@fal-ai/client";

    fal.config({ credentials: process.env.FAL_KEY });

    async function renderPoster(prompt) {
      const result = await fal.subscribe("fal-ai/ernie-image/turbo", {
        input: {
          prompt,
          image_size: "landscape_16_9",
          num_inference_steps: 24,
          enable_enhancer: true
        },
        logs: true,
        onQueueUpdate: (update) => {
          if (update.status === "IN_PROGRESS") {
            console.log("rendering...");
          }
        }
      });

      return result.data.images[0].url;
    }

Swap fal-ai/ernie-image/turbo for fal-ai/ernie-image when you need the standard tier's typography and composition fidelity. Most production surfaces use turbo for thumbnails and standard for hero placements.
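One way to keep that swap out of your call sites is a small lookup. This is a sketch only: the endpoint ids are the two named in this post, while the placement names and `endpointForPlacement` are hypothetical.

```javascript
// Endpoint ids as named in this post.
const ERNIE_IMAGE_ENDPOINTS = {
  standard: "fal-ai/ernie-image",
  turbo: "fal-ai/ernie-image/turbo",
};

// Hypothetical helper: hero placements get the standard tier,
// everything else (thumbnails, drafts) defaults to turbo.
function endpointForPlacement(placement) {
  return placement === "hero"
    ? ERNIE_IMAGE_ENDPOINTS.standard
    : ERNIE_IMAGE_ENDPOINTS.turbo;
}

console.log(endpointForPlacement("hero")); // fal-ai/ernie-image
console.log(endpointForPlacement("thumbnail")); // fal-ai/ernie-image/turbo
```

Defaulting to turbo means a new, unclassified surface lands on the cheaper tier until someone decides it deserves standard.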

When to call which

Reach for Qianfan when:

- Your users are in China and you want the lowest-latency ERNIE 5.0.

- You need Chinese language reasoning or document synthesis.

- You want the OpenAI-compatible wrapper so your existing clients work unchanged.

- Your workload is text or structured output, not image generation.

Reach for fal when:

- You need ERNIE-Image for typography-heavy or compositional generation.

- You want global edge routing rather than a single region.

- You are already integrated with the fal client for other image or video endpoints and want a single queue layer.

- You want turbo pricing for bulk draft work.

The clean integration

Keep two thin clients, one per platform, each with its own retry and timeout policy. Expose a single internal interface to the rest of your code that dispatches on task type. Text and multimodal chat lands on the Qianfan client. Image generation lands on the fal client. Share your observability layer, since both platforms report structured latency and status fields that slot into the same metrics pipeline.
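A rough sketch of that router follows. Every name in it is hypothetical (`createRouter`, the task shapes, the `chat` and `render` methods on the injected clients), and the timeout and retry numbers are placeholders to tune, not recommendations from either platform.

```javascript
// Placeholder per-platform policies; tune these for your own workloads.
const POLICIES = {
  qianfan: { timeoutMs: 30_000, maxRetries: 2 },
  fal: { timeoutMs: 120_000, maxRetries: 1 },
};

// Rejects if the promise does not settle within ms; always clears its timer.
function withTimeout(promise, ms) {
  let timer;
  const timeout = new Promise((_, reject) => {
    timer = setTimeout(() => reject(new Error(`timed out after ${ms} ms`)), ms);
  });
  return Promise.race([promise, timeout]).finally(() => clearTimeout(timer));
}

// Runs fn up to maxRetries + 1 times, rethrowing the last error.
async function withRetry(fn, { timeoutMs, maxRetries }) {
  let lastError;
  for (let attempt = 0; attempt <= maxRetries; attempt++) {
    try {
      return await withTimeout(fn(), timeoutMs);
    } catch (err) {
      lastError = err;
    }
  }
  throw lastError;
}

// The clients are injected, so each lane stays thin and easy to stub.
function createRouter({ qianfan, fal }) {
  const lanes = {
    chat: { policy: POLICIES.qianfan, call: (task) => qianfan.chat(task.messages) },
    image: { policy: POLICIES.fal, call: (task) => fal.render(task.prompt, task.tier) },
  };
  return async function run(task) {
    const lane = lanes[task.type];
    if (!lane) throw new Error(`no lane for task type: ${task.type}`);
    return withRetry(() => lane.call(task), lane.policy);
  };
}
```

Calling code then only ever sees `run({ type: "chat", messages })` or `run({ type: "image", prompt, tier })`. Note that a blind retry on the image lane can bill you twice for one render, so you may want to retry only on errors you know happened before the job was accepted.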

That is the whole pattern. Two platforms, two keys, one router, and a consistent interface on top. Pick the lane per workload, and you get the best of both sides of the ERNIE family without paying the tax of running everything through one surface.