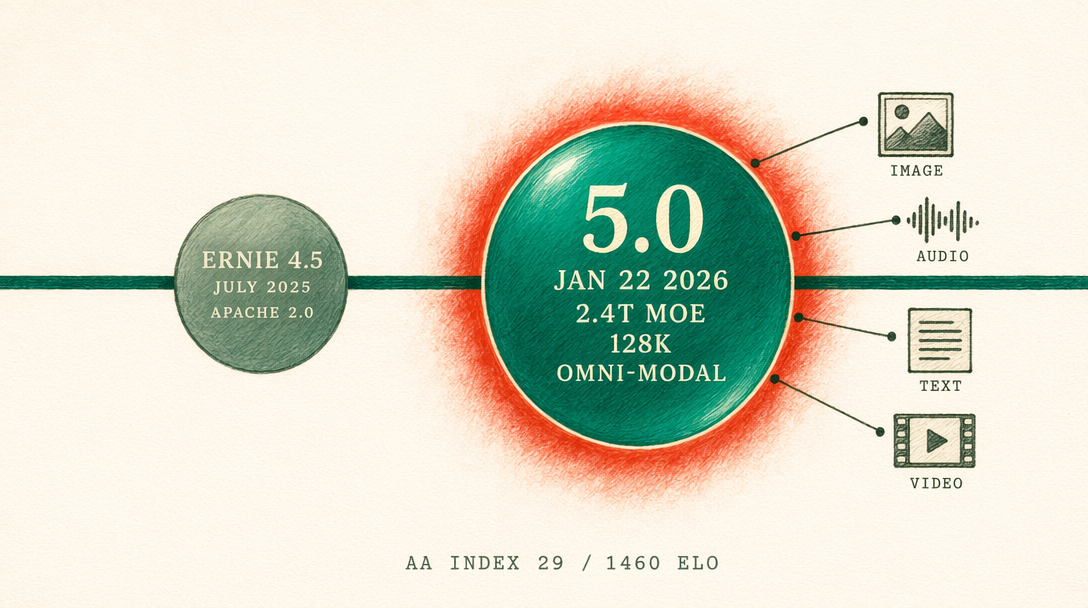

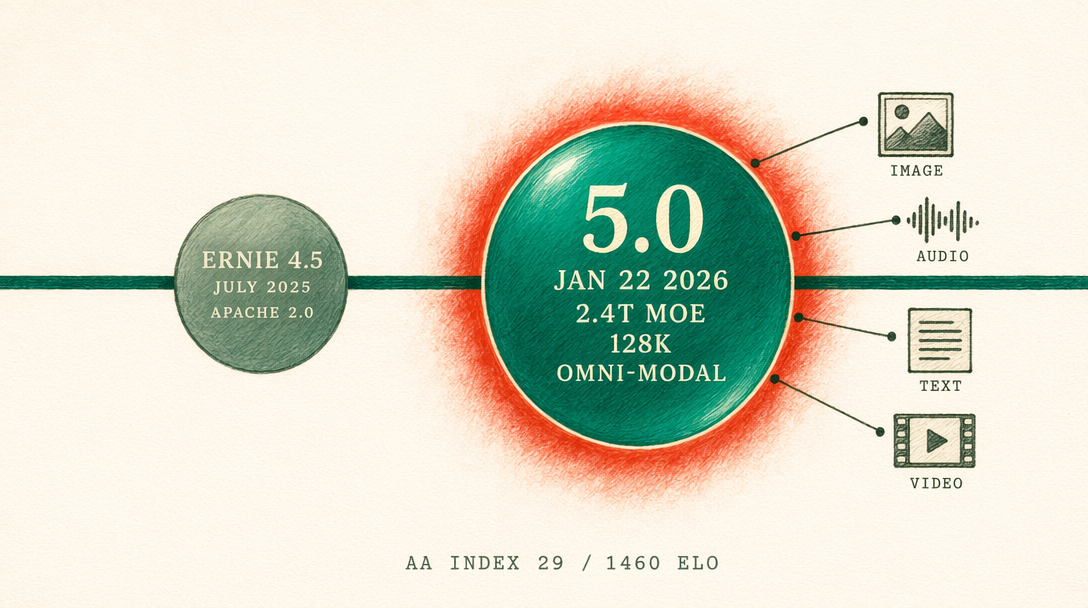

ERNIE 5.0 vs 4.5: The Omni-Modal Leap

ERNIE 5.0 landed on January 22, 2026 with a 2.4T MoE architecture and native omni-modal IO. Here is what changed versus 4.5 Turbo, and when the older line still earns its keep.

Baidu pushed ERNIE 5.0 to formal release on January 22, 2026, about ten weeks after the November preview. The headline numbers are a 2.4 trillion parameter mixture of experts with under 3% of weights active per inference, a 128K token context window, and native omni-modal input and output across text, image, audio, and video. ERNIE 5.0 scored 1460 Elo on LMArena as of January 15, 2026, which puts it first among Chinese models and eighth globally. AA Intelligence Index has the 5.0 Thinking Preview at 29 versus a 57 frontier set by Opus 4.7 and Gemini 3.1 Pro, so you are still looking at a roughly half-of-frontier reasoning tier at a domestic price point.

The 4.5 line is not retired. It stayed in the portfolio because it covers different work. 4.5 Turbo runs at roughly $0.14 per million input tokens and $0.55 per million output on Baidu Qianfan, versus $0.60 in and $2.10 out for ERNIE 5.0. If you are feeding a high volume pipeline that pulls in Chinese legal filings or product catalogs and spits out classification labels, the 4x cost delta on input matters more than the reasoning gap.

What native omni-modal actually means

ERNIE 4.5 solved cross modal work by chaining tools. You sent text to the LLM, called a separate image model for rendering, piped audio through a TTS endpoint, and stitched the outputs back together in application code. Each hop added latency, and the model never actually saw the intermediate artifacts as peer tokens.

5.0 treats text, image patches, audio spectrograms, and video frames as a single token stream. You can paste a meeting recording and ask for a Chinese summary plus a slide deck in one call. The model understands that an image in the prompt and a paragraph of text live in the same attention window. You stop paying for three round trips when one will do.

The practical consequence is that workflows that used to need a custom orchestrator now collapse into one request. A customer service app that reads a screenshot of a crashed checkout page, listens to a 30 second voice memo from the customer, and drafts a reply in Simplified Chinese is a single prompt on 5.0. On 4.5 Turbo, you needed at least three calls and a state machine.

When 4.5 is still the right answer

The open weight range from July 2025 covers 0.3B to 424B parameters, all under an Apache compatible terms. You can finetune 4.5 on your own GPUs. ERNIE 5.0 is Qianfan only at launch, and there is no public signal about weights. If you need on-prem or you need a small model you can ship on a single card, 4.5 is where you look.

The fal.ai story

The ERNIE family on fal.ai today is image only: fal-ai/ernie-image at $0.03 per megapixel and fal-ai/ernie-image/turbo at $0.01 per megapixel, plus LoRA variants and the trainer. There is no fal endpoint for ERNIE 5.0 text or video yet. For text generation you go direct to Baidu Qianfan. Here is the image call you would pair with a Qianfan text call if you are building a text plus cover illustration pipeline:

1import { fal } from '@fal-ai/client';23fal.config({ credentials: process.env.FAL_KEY });45const cover = await fal.subscribe('fal-ai/ernie-image', {6 input: {7 prompt: 'A minimalist editorial poster in Simplified Chinese about the January 2026 release of a large language model, deep teal and emerald palette, bilingual heading',8 image_size: 'landscape_16_9',9 num_inference_steps: 50,10 enable_prompt_enhancer: true,11 },12 logs: true,13});1415console.log(cover.data.images[0].url);

Pair that with a Qianfan call to ernie-5.0 for the article body and you have the full pipeline without leaving the Baidu stack for reasoning and without leaving fal for imagery.

Cost math on a 10K request day

Assume 4K input and 1K output tokens per request, 10,000 requests daily. On 4.5 Turbo you burn 40M input at $0.14 per million and 10M output at $0.55 per million, which is $11.10 per day. On ERNIE 5.0 the same volume is $45.00 per day. You are paying roughly 4x for the omni-modal and reasoning upgrade. If only a quarter of those requests truly need the upgrade, route by default: 4.5 Turbo for everything, 5.0 on an explicit flag.

Migration checklist

Before flipping a production workload to 5.0, ask four questions. Does the request need cross modal input? Does the output quality gap justify the 4x cost? Are you within the 128K context budget? Do you have a fallback to 4.5 Turbo if Qianfan has a region outage? If any answer is no, keep the request on 4.5.